|

PROJECTS AND DEMONSTRATIONS

Here are several projects and demos I am currently involved in or have been involved in in the past:

FEMVoQ: Three-dimensional finite element simulation of voice quality: influence of phonation types and vocal tract shaping

In this project, we aim to significantly increase the number and types of 3D-generated spoken utterances reported to date in the literature, surpassing the current state of the art of 3D vocal tract (VT) acoustics without resorting to supercomputer facilities. Moreover, we also wish to endow these utterances with voice qualities (VoQ), which arise from variations in the phonation type and in the VT shape: from the well-known Lombard effect, to the singing formant that allows for better voice projection, to speaking in sad or aggressive styles, to name a few.

GENIOVOX: Computational generation of expressive voice

In this project, we aim at the computational generation of expressive voice by following a hybrid approach. We will avoid the inherent limitations of voice corpora while benefiting from the voice transformation techniques developed in that area. The key idea is to map the parameter modifications in recorded voice that are responsible for expressive effects onto glottal pulse models and vocal tract geometries. The former are used as boundary conditions at the glottis in numerical simulations of vocal tract acoustics. The vocal tract geometries will vary in time to achieve the desired expressive effects.

DYNAMAP: DYnamic Acoustic MAPping - Development of low cost sensors networks for real time noise mapping

DYNAMAP is a LIFE+ project aimed at developing a dynamic noise mapping system able to detect and represent in real time the acoustic impact of road infrastructure. The main objective of this project is to ease and reduce the cost of periodically updating noise maps, as required by the European Directive 2002/49/EC on environmental noise. To that end, an automatic monitoring system, based on customised low-cost sensors and a software tool implemented on a general-purpose GIS platform, will be developed and built in two pilot areas: one along the A90 motorway that surrounds the city of Rome (Italy), and one inside the agglomeration of Milan (Italy). A one-year survey will then be undertaken to check the reliability, effectiveness and efficiency of the DYNAMAP system.

In this project, we will develop an Anomalous Noise Events Detection Algorithm (ANED) to identify and discard the events that are not representative of road traffic noise (denoted as anomalous events) and that, as a consequence, distort the noise levels measured by the sensors.
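To illustrate why anomalous events distort the measured levels, the following minimal Python sketch energy-averages short-term sound levels into an equivalent level, with and without the frames flagged as anomalous. It is not the ANED algorithm: the detector is not described here, so the flags are assumed to be given, and the function names and sample values are hypothetical.

```python
import math


def equivalent_level(levels_db):
    """Energy-average short-term sound levels (dB) into one equivalent level."""
    if not levels_db:
        raise ValueError("at least one level is required")
    mean_energy = sum(10 ** (level / 10) for level in levels_db) / len(levels_db)
    return 10 * math.log10(mean_energy)


def leq_without_anomalies(levels_db, is_anomalous):
    """Discard frames flagged as anomalous before averaging.

    The flags are assumed to come from some detector; producing them is
    outside the scope of this sketch.
    """
    kept = [level for level, flag in zip(levels_db, is_anomalous) if not flag]
    return equivalent_level(kept)


# Hypothetical data: three traffic frames at 60 dB and one loud outlier at 90 dB.
levels = [60.0, 60.0, 60.0, 90.0]
flags = [False, False, False, True]

raw = equivalent_level(levels)
cleaned = leq_without_anomalies(levels, flags)
print(f"raw: {raw:.1f} dB, cleaned: {cleaned:.1f} dB")
```

Because the averaging is done on energies rather than on decibel values, a single loud frame dominates the result, which is why discarding non-traffic events matters for the resulting noise map.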

EUNISON: Extensive UNIfied-domain SimulatiON of the human voice

In the EUNISON project, we seek to build a new voice simulator that is based on physical first principles to an unprecedented degree. From given inputs, representing topology or muscle activations or phonemes, it will render the 3-D physics of the voice, including of course its acoustic output. This will give important insights into how the voice works, and how it fails. The goal is not a speech synthesis system, but rather a voice simulation engine, with many applications; given the right controls and enough computer time, it could be made to speak in any language, or sing in any style. KTH coordinates this project, with a budget of 2.96 million euros.

In this project, Dr. Oriol Guasch is the Scientific Coordinator, and our team leads Work Packages 5 (Simulations of the Vocal Tract) and 8 (Dissemination).

THOFU: Technologies for the HOtel of the FUture

The main objective of this project is to design the hotel of the future, covering its construction, its components, the interaction with users, security, and integration with its surroundings and the Internet. Gesfor Group leads this project with a budget of 23 million euros.

In the project, our team will be involved in the work package related to intelligent and adaptive interfaces within a high-tech hotel, researching new paradigms of interaction and studying usability and user experience.

CreaVeu: From any text to any voice

The main objective of this project is to integrate text-to-speech and voice conversion technologies, and to provide an intuitive graphical user interface that allows a non-expert user to adapt the main expressive characteristics of the synthesized voice (timing, character, etc.) to a given target. The main application domains of this technology are entertainment, videogames, etc.

EmoLib: Emotion identification from text

EmoLib is a library that extracts the affect and emotions from an incoming text by tagging the text according to the feeling it expresses or conveys. EmoLib has been coded in the Java programming language.

evMIC: Multimodal, Immersive and Collaborative Virtual Environments

The main objective of this project is to create an interoperable, user-centric platform that allows the creation of virtual learning environments, overcoming the current limitations and aligning with the current definition of what will be "The Future Internet."

Besides contributing to state-of-the-art documents on speech technologies, multimodal processing, and graphics and virtual reality, our team is going to participate in developing interfaces to interact with the virtual environment involving expressive text-to-speech synthesis, multimodal affect analysis, and 3D avatar modelling and synthesis.

INREDIS: INterfaces for RElations between Environment and people with DISabilities

The main objective of this project is to develop foundational technologies that enable the creation of communication and interaction channels between people with disabilities and their environment. Technosite leads this project with a budget of 23.6 million euros.

Besides contributing to detailed state-of-the-art documents on speech technologies, multimodal processing, and graphics and virtual reality, our team is going to participate in developing applications involving expressive text-to-speech synthesis, multimodal affect analysis, and 3D avatar modelling and synthesis.

MAGNUS: Mouse Advanced GNU Speech

MAGNUS is a mouse pointer application controlled through Catalan voice commands. It aims to provide oral accessibility for people with reduced mobility.

This project was developed by Alexandre Trilla as part of his Master's thesis. Project members:

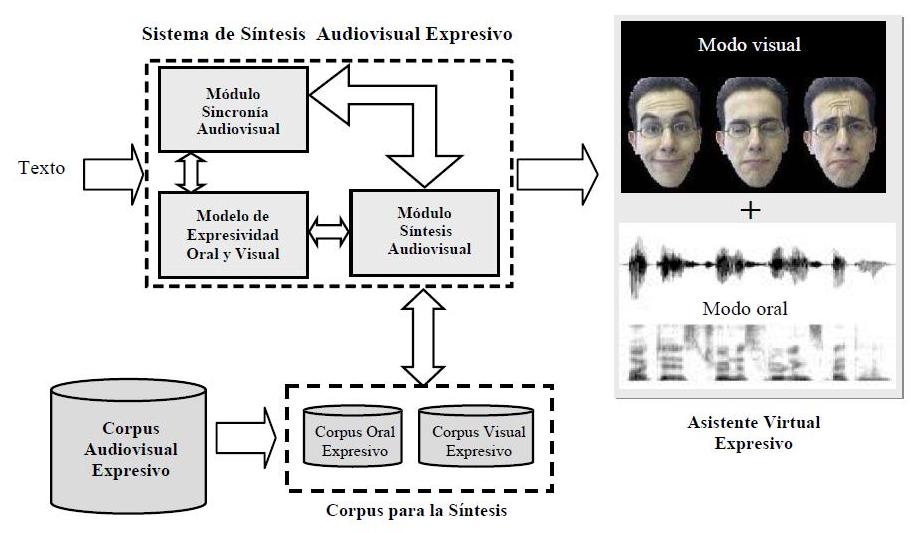

SAVE: Expressive AudioVisual Synthesis

The project focuses on research into a highly expressive multimodal output interface that gives the end user an impression of high naturalness. The project proposes the study and development of a novel expressive audiovisual synthesis system based on a photorealistic talking head.

Project funded by the Spanish Ministry of Science and Technology (TEC2006-08043/TCM). Period: 2007-2009

SALERO: Semantic AudiovisuaL Entertainment Reusable Objects

Our group is involved in this project to develop innovative multilingual text-to-speech techniques for achieving expressive speech synthesis in the cross-media production framework (e.g. movies, games, broadcast, etc.).

Sam, the Virtual Weatherman

Automatic service for weather forecasts on demand (TV, Internet and mobile devices) by means of a virtual speaker called Sam.

Our group has developed the corpus-based text-to-speech system embedded in the forecast application. Project members:

IntegraTV-4all

Adapted leisure, information and remote assistance services via a television set, with advanced natural language voice communication functionalities for people with sensory disabilities and the elderly.

Our group has developed an audio-visual alarm clock, integrated into the hotel TV menu, by improving our previous virtual speaker (see the Virtual Speaker section). Project members:

VIRTUAL SPEAKER

In this project, we developed an expressive, realistic 2D virtual speaker based on image processing and text-to-speech synthesis in Catalan, Spanish and English:

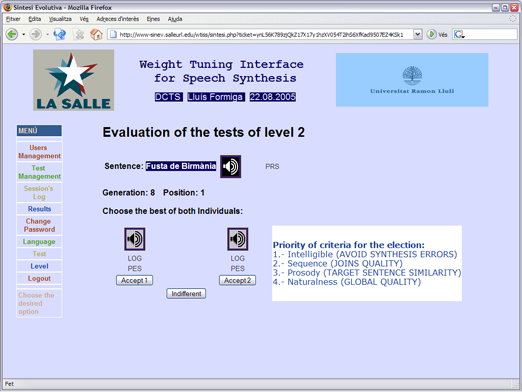

WEIGHT TUNING INTERFACE FOR SPEECH SYNTHESIS (CATALAN)

This is a web platform, based on evolutionary computation, which has been designed to find the optimal weight configuration of the cost function for unit selection text-to-speech synthesis:

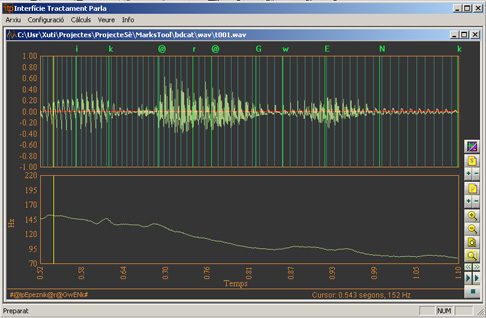
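As a rough illustration of the idea behind evolutionary weight tuning, the Python sketch below evolves a vector of cost-function weights with a simple elitist strategy (mutate, merge, keep the fittest). It is not the platform's actual algorithm: the mutation scheme, the population settings and, above all, the fitness function are assumptions. The toy fitness (distance to a hypothetical target vector) merely stands in for whatever quality measure the real platform uses.

```python
import random


def normalise(weights):
    """Scale the weights so that they sum to 1."""
    total = sum(weights)
    return [w / total for w in weights]


def mutate(weights, rng, sigma=0.1):
    """Add Gaussian noise to each weight, keep it positive, and renormalise."""
    perturbed = [max(1e-6, w + rng.gauss(0.0, sigma)) for w in weights]
    return normalise(perturbed)


def evolve_weights(fitness, n_weights, rng, population_size=20, generations=50):
    """Return the weight vector with the lowest fitness found by the search."""
    population = [
        normalise([rng.random() + 1e-6 for _ in range(n_weights)])
        for _ in range(population_size)
    ]
    for _ in range(generations):
        offspring = [mutate(parent, rng) for parent in population]
        # Elitist selection: parents and offspring compete, the best survive.
        population = sorted(population + offspring, key=fitness)[:population_size]
    return population[0]


# Hypothetical stand-in for a real quality measure (lower is better).
TARGET = [0.5, 0.3, 0.2]


def toy_fitness(weights):
    return sum((w - t) ** 2 for w, t in zip(weights, TARGET))


best = evolve_weights(toy_fitness, n_weights=3, rng=random.Random(0))
print([round(w, 3) for w in best])
```

Keeping the weights positive and normalised is a design choice of this sketch: only the relative importance of each sub-cost matters when the weighted costs are compared between candidate units.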

ITP - Speech Processing Interface

This is an interface for speech labelling (automatic and/or manual). Pitch marks, phoneme boundaries, pitch curve, spectrogram and prosodic features are some of the speech parameters that can be extracted by means of this interface. It runs on Windows platforms.

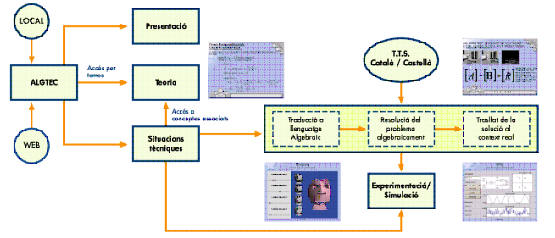

ALGTEC (ALGEBRA & TECHNOLOGY)

ALGTEC is a multimedia application that helps and motivates engineering students to learn algebra concepts. By means of a virtual teacher, it presents algebra concepts applied to technological situations.

|